Meta will start labeling AI-generated images posted on its Facebook and Instagram platforms before the 2024 US presidential election.

Nick Clegg, the social media giant’s president of global affairs, announced in a February 6 blog post that images generated by AI tools and published on Facebook, Instagram and Threads will appear with an AI label whenever possible, in all languages supported by these platforms.

The new labels will be applied “in the coming months,” said Clegg, a former UK Deputy Prime Minister.

“It’s important that we help people know when photorealistic content they’re seeing has been created using AI,” Clegg wrote.

“We’re taking this approach through the next year, during which a number of important elections are taking place around the world. During this time, we expect to learn much more about how people are creating and sharing AI content, what sort of transparency people find most valuable, and how these technologies evolve.”

Invisible Watermarks and AI Image Generation

Meta offers its own AI image generator, Meta AI, which helps people create pictures with simple text prompts.

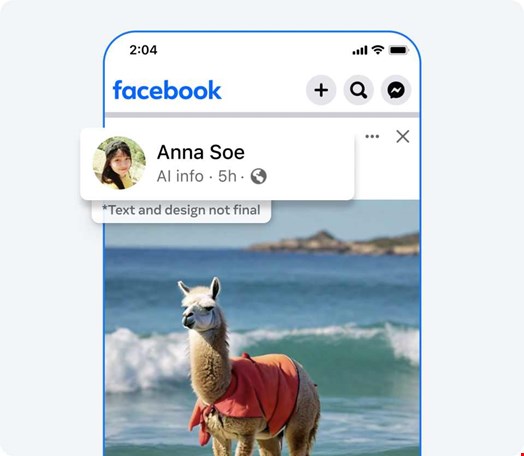

Images created with Meta AI are associated with an ‘Imagined with AI’ label.

“When photorealistic images are created using our Meta AI feature, we do several things to make sure people know AI is involved, including putting visible markers that you can see on the images, and both invisible watermarks and metadata embedded within image files,” Clegg wrote.

Using both invisible watermarking and embedded metadata helps other platforms identify these images as AI-generated.
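To illustrate how a receiving platform might read a metadata marker of this kind, here is a minimal sketch. It assumes the image carries the IPTC "digital source type" value for AI-generated media in its embedded XMP metadata, stored as uncompressed text. The article does not say which metadata fields Meta writes, so the choice of marker and the function names are illustrative assumptions, not Meta's implementation.

```python
# Hypothetical sketch: scan an image file's raw bytes for the IPTC
# "digital source type" URI that denotes AI-generated media.
# Assumption: the marker sits in uncompressed XMP metadata, so a plain
# byte search can find it. Real detectors would parse the metadata
# properly and also check invisible watermarks.

AI_SOURCE_TYPE = (
    b"http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
)


def has_ai_metadata_marker(image_bytes: bytes) -> bool:
    """Return True if the bytes contain the IPTC AI source-type URI."""
    return AI_SOURCE_TYPE in image_bytes


def label_for(image_bytes: bytes) -> str:
    """Pick a display label based only on the metadata marker."""
    return "AI label" if has_ai_metadata_marker(image_bytes) else "no label"


# Toy stand-ins for real files: JPEG start/end markers around fake XMP.
tagged = (
    b"\xff\xd8<x:xmpmeta>Iptc4xmpExt:DigitalSourceType=\""
    + AI_SOURCE_TYPE
    + b"\"</x:xmpmeta>\xff\xd9"
)
untagged = b"\xff\xd8\xff\xd9"

print(label_for(tagged))
print(label_for(untagged))
```

A check like this only works while the metadata survives. Re-saving or screenshotting an image can strip it, which is one reason a metadata marker is paired with an invisible watermark.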

The update on February 6 expands the labeling of AI-generated images to those developed on rival services.

Although Clegg only mentioned images, he added that Meta is “working with industry partners on common technical standards for identifying AI content, including video and audio.”

How Meta Plans to Detect AI Images Generated by Other Services

Meta said it will develop tools to “detect standard indicators” that images are AI-generated. However, no such standard has yet been widely adopted.

This means Meta will have to choose between developing its own standards and adopting existing ones.

Existing standards include cryptographic credentials for AI-generated images developed through the Content Authenticity Initiative (CAI), an AI watermarking project led by the Coalition for Content Provenance and Authenticity (C2PA).

C2PA is a project of the Joint Development Foundation, a Washington-based non-profit that aims to tackle misinformation and manipulation in the digital age by implementing cryptographic content provenance standards.

Although in its infancy, C2PA counts Adobe, X (Twitter) and The New York Times among its members. Its Content Authenticity Initiative has recently been endorsed by OpenAI.

Users Must Label AI-Generated Content, or Face Penalties

In the blog post, Clegg also said that Meta will provide a feature allowing users “to disclose when they share AI-generated video or audio” – and will likely make its use mandatory.

“We’ll require people to use this disclosure and label tool when they post organic content with a photorealistic video or realistic-sounding audio that was digitally created or altered, and we may apply penalties if they fail to do so,” Clegg explained.

This could indicate that Meta will extend these measures to all digitally created misleading or fake content – not just content generated by AI tools.

Expanding its manipulated content policy to encompass non-AI misleading content was one of Meta’s Oversight Board recommendations in a February 5 blog post addressing the company’s reaction to a fake Biden video.
