Chief information security officers now have a new tool at their disposal to get started with AI securely.

The Open Web Application Security Project (OWASP) released the LLM AI Cybersecurity & Governance Checklist.

This 32-page document is designed to help organizations create a strategy for implementing large language models (LLMs) and mitigate the risks associated with the use of these AI tools.

Sandy Dunn, chief information security officer (CISO) at Quark IQ and lead author of the checklist, began work on it in August 2023 as a supporting resource for OWASP’s Top 10 Security Issues for LLM Applications, published in the summer of 2023.

“I started the first version to address issues I noticed in discussions with other CISOs and cybersecurity practitioners. I saw there was really a lot of confusion on what they needed to think about and where to get started [with AI],” she told Infosecurity.

Steps to Take Before Implementing an LLM Strategy

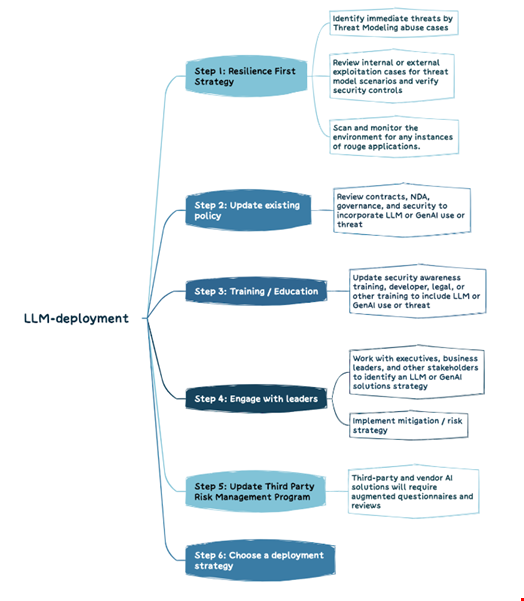

First, the document lists steps to take before deploying an LLM strategy, including reviewing your cyber resilience and security training strategies and engaging with leadership about any plans to integrate AI into your workflows.

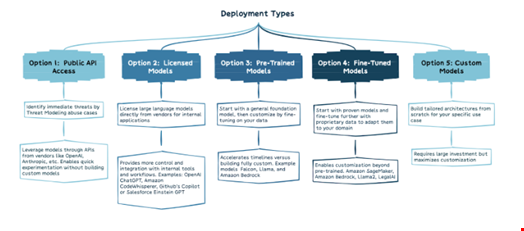

It also provides an overview of five ways organizations can deploy LLMs, depending on their needs.

“The scopes range from leveraging public consumer applications to training proprietary models on private data. Factors like use case sensitivity, capabilities needed, and resources available help determine the right balance of convenience vs. control,” reads the document.

Although this list is far from exhaustive, Dunn said that understanding these five model types provides a practical starting framework for evaluating options.

In a second, larger part, the document outlines a list of 13 things to consider when implementing an LLM use case without adding unnecessary risk to your organization.

These include:

- Business-oriented measures, such as establishing business cases or choosing the right LLM solutions

- Risk management measures, such as threat modeling your use case, monitoring AI risk, and implementing AI-focused security training and AI red teaming

- Legal, regulatory and policy measures (e.g., establishing compliance requirements, implementing testing, evaluation, verification, and validation processes)

A Milestone for OWASP's Effort to Safeguard AI

Dunn commented: “The four things I really wanted people to take from the checklist were the following:

- Generative AI is a vastly different technology than we’ve tried to protect organizations from before and it will require a completely different mindset to protect an organization;

- AI brings asymmetrical warfare: The adversary has an advantage because of the complexity and breadth of the attack surface. The first thing to address is how fast attackers will be able to use these tools to accelerate their attacks, which we are already seeing;

- Approach AI implementation holistically;

- Use existing legislation to inform your strategy: Even though very few AI laws are currently enforceable, many existing laws like the EU’s General Data Protection Regulation (GDPR) and privacy and security state laws impact your AI business requirements.”

Dunn said she initially included more legal and regulatory information in the first draft of the document, but upon review, the team thought it was too US-centric and decided to keep this part high-level.

“I also saw that as something that fits more appropriately in the OWASP AI Exchange work,” she added.

The OWASP AI Exchange is a platform introduced in 2023 by the OWASP Foundation to be the collaboration hub for AI security standard alignment.

John Sotiropoulos, a senior security architect at Kainos and part of the core group behind OWASP’s Top 10 for LLMs, said the checklist “represents a milestone for OWASP's effort to safeguard AI.”

“Combined with our work in AI Exchange, and collaboration with standards organizations, vendors and public cybersecurity agencies, the checklist helps OWASP unify AI security advice. Our membership in the US AI Safety Consortium (AISIC) will accelerate this trend.”

The OWASP Foundation announced in early February 2024 that it was joining the US AI Safety Institute Consortium (AISIC).
